Storage Optimization Techniques

Storage optimization techniques align data practices with business goals to balance cost and performance. Data lifecycle strategies, including tiering, deduplication, and compression, reduce footprint while preserving access. Workload-driven design, with IOPS profiling and latency targets, informs scalable architecture. Automation, monitoring, and archival policies sustain those gains and keep risk under control. The framework is data-driven and repeatable; quantifying its impact is the step that unlocks further efficiency.

What Is Storage Optimization Really About

Storage optimization is the practice of aligning data storage practices with business goals to maximize efficiency, reduce costs, and improve performance. It centers on measurable outcomes: data retention guidelines, cost visibility, and scalable governance. By quantifying storage needs and continuously monitoring usage, organizations can make informed decisions, allocate resources more effectively, and sustain performance while keeping room to adapt.

Data Lifecycle: Tiering, Deduplication, and Compression

Data lifecycle management relies on tiering, deduplication, and compression to balance cost and performance. Tiering moves content between storage classes based on access patterns, latency tolerance, and business value, with cold storage reserved for rarely accessed archives and compliance data. Deduplication eliminates redundant copies of data, while compression reduces the footprint of what remains.
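As a rough illustration of how the three techniques fit together, the sketch below assigns a tier from access recency, deduplicates blocks by content hash, and measures the compressed footprint. The function names, the tier labels, and the 30-day and 180-day thresholds are hypothetical values chosen for the example, not recommendations.

```python
import hashlib
import zlib

# Hypothetical thresholds; real cut-offs depend on access patterns and latency tolerance.
HOT_MAX_DAYS = 30
WARM_MAX_DAYS = 180


def choose_tier(days_since_last_access: int) -> str:
    """Map access recency to a tier label (illustrative policy only)."""
    if days_since_last_access <= HOT_MAX_DAYS:
        return "hot"
    if days_since_last_access <= WARM_MAX_DAYS:
        return "warm"
    return "cold"


def deduplicate(blocks: list[bytes]) -> dict[str, bytes]:
    """Keep one copy of each distinct block, keyed by its SHA-256 digest."""
    unique: dict[str, bytes] = {}
    for block in blocks:
        digest = hashlib.sha256(block).hexdigest()
        unique.setdefault(digest, block)
    return unique


def compressed_size(data: bytes) -> int:
    """Return the size of the data after zlib (DEFLATE) compression."""
    return len(zlib.compress(data, level=6))


# Synthetic data: the third block is an exact duplicate of the first.
blocks = [b"A" * 4096, b"B" * 4096, b"A" * 4096]
unique_blocks = deduplicate(blocks)
raw_bytes = sum(len(b) for b in blocks)
stored_bytes = sum(compressed_size(b) for b in unique_blocks.values())
print(f"raw={raw_bytes} B, stored after dedup+compression={stored_bytes} B")
```

The synthetic blocks are highly repetitive, so they compress far better than typical production data would; measured ratios on real workloads are the only reliable guide.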

Workload Evaluation and Architecture Choices

Workload evaluation informs architecture choices by translating access patterns, latency requirements, and value metrics into concrete design decisions. The approach emphasizes data-driven assessments, scalable configurations, and efficiency gains.

Architecture selections balance capacity budgeting with performance targets, aligning storage tiers to workload variability. IOPS tuning and workload profiling guide resource allocation, supporting predictable latency, improved throughput, and cost-effective growth without sacrificing the ability to adapt.
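Workload profiling of this kind can be sketched as a small calculation over I/O samples: an average IOPS figure and a latency percentile checked against a target. The `IoSample` record, the function names, and the synthetic samples are assumptions made for illustration; a real profile would come from the storage system's own metrics.

```python
import math
from dataclasses import dataclass


@dataclass
class IoSample:
    timestamp_s: float  # completion time of the I/O, in seconds
    latency_ms: float   # observed latency of that I/O, in milliseconds


def average_iops(samples: list[IoSample]) -> float:
    """Approximate operations per second over the observed time window."""
    if len(samples) < 2:
        return 0.0
    timestamps = [s.timestamp_s for s in samples]
    window_s = max(timestamps) - min(timestamps)
    return len(samples) / window_s if window_s > 0 else 0.0


def percentile_latency(samples: list[IoSample], pct: float) -> float:
    """Nearest-rank latency percentile, with pct in the range (0, 100]."""
    if not samples:
        raise ValueError("no samples to profile")
    ordered = sorted(s.latency_ms for s in samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_latency_target(samples: list[IoSample], pct: float, target_ms: float) -> bool:
    """True when the chosen latency percentile is within the target."""
    return percentile_latency(samples, pct) <= target_ms


# Synthetic profile: one I/O per second with latencies of 1 ms to 100 ms.
samples = [IoSample(timestamp_s=float(i), latency_ms=float(i + 1)) for i in range(100)]
print(f"IOPS ~ {average_iops(samples):.2f}")
print(f"p99 latency = {percentile_latency(samples, 99)} ms")
print(f"meets 50 ms p99 target: {meets_latency_target(samples, 99, 50.0)}")
```

Percentiles are used here instead of averages because a small number of slow requests can dominate user-visible latency while barely moving the mean.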

Proactive Policies: Automation, Monitoring, and Archival Strategies

Proactive policies enable continuous optimization through automation, monitoring, and archival strategies that align with service-level targets and cost constraints. Automation governance standardizes actions, reduces manual drift, and shortens response times. Data-driven dashboards quantify efficiency gains, while archival analytics guide tiering decisions. Together, these scalable workflows support proactive risk management, cost containment, and consistent service delivery.
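One way to picture an automated archival policy is as a rule evaluated against a file inventory, producing a plan that can be reviewed before anything is moved. The sketch below is a dry run only; `FileRecord`, `ArchivalRule`, the thresholds, and the sample inventory are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRecord:
    path: str
    size_bytes: int
    days_since_access: int


@dataclass(frozen=True)
class ArchivalRule:
    min_idle_days: int   # archive once a file has been idle at least this long
    min_size_bytes: int  # skip files too small to be worth moving


def plan_archival(records: list[FileRecord], rule: ArchivalRule) -> list[FileRecord]:
    """Return the records the rule would archive; nothing is moved (dry run)."""
    return [
        r for r in records
        if r.days_since_access >= rule.min_idle_days and r.size_bytes >= rule.min_size_bytes
    ]


def reclaimable_bytes(plan: list[FileRecord]) -> int:
    """Primary-tier bytes that would be freed if the plan were executed."""
    return sum(r.size_bytes for r in plan)


# Hypothetical inventory and rule, for illustration only.
inventory = [
    FileRecord("logs/2021.tar", 5_000_000_000, 400),
    FileRecord("db/active.dat", 20_000_000_000, 1),
    FileRecord("tmp/note.txt", 1_000, 900),
]
rule = ArchivalRule(min_idle_days=180, min_size_bytes=1_000_000)
plan = plan_archival(inventory, rule)
print([r.path for r in plan], reclaimable_bytes(plan))
```

Separating the plan from its execution gives monitoring a number to report (reclaimable bytes) and leaves a review point before any data moves.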

Frequently Asked Questions

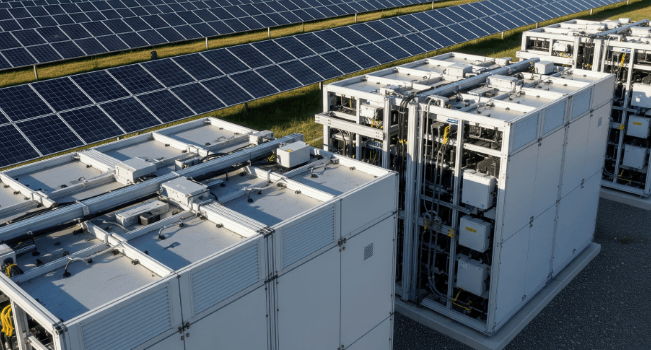

How Do Storage Options Impact Energy Consumption and Cooling Costs?

Energy and cooling costs rise with denser, less efficient storage configurations, because the power that drives and controllers draw is ultimately released as heat that must be removed. Efficiency-focused designs lower energy use, cooling demand, and operating costs, which makes them easier to justify to data-driven stakeholders.

What Are the Hidden Costs of Tiering and Data Movement?

Beware the long tail: tiering and data movement carry hidden costs that are easy to overlook across large volumes of rarely accessed data. These can include retrieval and transfer charges as well as the time needed to restore data from colder tiers. Quantifying the tradeoffs of tier transitions and archival workflows keeps the analysis data-driven and shows how those costs should shape infrastructure decisions.

Can AI Improve Forecasting for Capacity Planning Accuracy?

AI forecasting can improve capacity planning accuracy by fitting models to historical usage data, and the resulting forecasts guide capacity decisions. Accuracy tends to rise as the models capture more of the underlying usage patterns, which reduces over-provisioning and lets decision makers rely on the predictions when allocating resources.
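The simplest form of such a forecast is a trend line fitted to past usage. The sketch below uses an ordinary least-squares linear fit as a stand-in for a more capable model; it is not an AI method in itself, and the usage history, capacity figure, and function names are hypothetical.

```python
import math
from typing import Optional


def fit_linear_trend(usage_tb: list[float]) -> tuple[float, float]:
    """Least-squares fit of usage against period index; returns (slope, intercept)."""
    n = len(usage_tb)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(usage_tb) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(usage_tb))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(usage_tb: list[float], periods_ahead: int) -> float:
    """Projected usage the given number of periods after the last observation."""
    slope, intercept = fit_linear_trend(usage_tb)
    return intercept + slope * (len(usage_tb) - 1 + periods_ahead)


def periods_until_full(usage_tb: list[float], capacity_tb: float) -> Optional[int]:
    """Periods until the trend reaches capacity, or None if usage is not growing."""
    slope, intercept = fit_linear_trend(usage_tb)
    if slope <= 0:
        return None
    current = intercept + slope * (len(usage_tb) - 1)
    return max(0, math.ceil((capacity_tb - current) / slope))


# Hypothetical monthly usage in TB, growing by 10 TB per month.
history = [100.0, 110.0, 120.0, 130.0]
print(f"forecast in 3 months: {forecast(history, 3):.1f} TB")
print(f"months until 200 TB is reached: {periods_until_full(history, 200.0)}")
```

A linear trend ignores seasonality and step changes, so it serves best as a baseline against which a richer forecasting model can be compared.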

How Does Data Sovereignty Affect Optimization Strategies?

Data sovereignty shapes optimization strategies by imposing regulatory constraints on where data resides and where it is processed. These constraints influence scalability, system efficiency, and risk management, so optimization has to work within compliant, data-driven architectures.

What Are the Security Risks of Automated Archival Workflows?

Automated archival workflows risk data integrity breaches and access-control misconfigurations, potentially enabling unauthorized retrieval or tampering. They demand robust auditing, immutable provenance, strict role-based access, and scalable monitoring to keep data operations secure.
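One common way to make an audit trail tamper-evident is to chain entries together by hash, so that altering any earlier entry invalidates every later one. The sketch below illustrates the idea with an in-memory list; the field names and helper functions are hypothetical, and a real deployment would also need to protect the log store itself.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], actor: str, action: str, target: str) -> dict:
    """Append an audit entry that commits to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "target": target, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and link; False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical archival events.
audit_log: list[dict] = []
append_entry(audit_log, "archiver-job", "archive", "logs/2021.tar")
append_entry(audit_log, "alice", "restore", "logs/2021.tar")
print("chain intact:", verify_chain(audit_log))
```

Hash chaining detects modification after the fact; it does not prevent it, which is why it complements role-based access control instead of replacing it.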

Conclusion

Storage optimization centers on measurable efficiency gains through lifecycle management, data reduction, and workload-aware design. By quantifying needs, applying tiering, deduplication, and compression, and automating governance, organizations attain scalable, cost-visible performance. As a hypothetical example, a retailer might reduce primary storage by 40% after implementing tiered archives and proactive analytics, while preserving latency targets through workload profiling. The result: data-finance alignment, predictable costs, and agile capacity planning. In this data-driven approach, continuous monitoring and archival strategies sustain efficiency and support risk-managed growth.